
10/15/2020 Download Entire Website Mac Wget


Nov 18, 2019. Use wget instead. Install it with Homebrew (brew install wget) or MacPorts (sudo port install wget). To download files from a directory listing, use -r (recursive), -np (don't follow links to parent directories), and -k to make links in downloaded HTML or CSS point to local files (credit @xaccrocheur).
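As a rough sketch of that advice, the three flags can be wrapped in a small shell function. The function name fetch_listing and the example URL are made up for illustration; only the wget flags come from the text above.

```shell
#!/bin/sh
# fetch_listing is a hypothetical helper name; pass it the URL of a directory listing.
fetch_listing() {
    # -r   recurse into linked pages and files
    # -np  never climb up to the parent directory
    # -k   rewrite links in saved HTML/CSS so they point at the local copies
    wget -r -np -k "$1"
}
```

Call it as fetch_listing https://www.yoursite.com/files/ from the folder where you want the files to land.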

What does WGET Do?

Once installed, the WGET command allows you to download files over the TCP/IP protocols: FTP, HTTP and HTTPS.

If you’re a Linux or Mac user, WGET is either already included in the package you’re running or it’s a trivial case of installing from whatever repository you prefer with a single command.

Unfortunately, it’s not quite that simple in Windows (although it’s still very easy!).

To run WGET on Windows, you need to download it, unzip it and install it manually.

Install WGET in Windows 10

Download the classic 32-bit version 1.14 here, or go to the Windows binaries collection at Eternally Bored for the later versions and the faster 64-bit builds.

Here is the downloadable zip file for version 1.2 64 bit.

If you want to be able to run WGET from any directory inside the command terminal, you’ll need to learn about path variables in Windows to work out where to copy your new executable. If you follow these steps, you’ll be able to make WGET a command you can run from any directory in Command Prompt.

Run WGET from anywhere

Firstly, we need to determine where to copy WGET.exe.

After you’ve downloaded wget.exe (or unpacked the associated distribution zip files), open a command terminal by typing “cmd” in the search menu:

We’re going to move wget.exe into a Windows directory that will allow WGET to be run from anywhere.

First, we need to find out which directory that should be. Type:

path

You should see something like this:

Thanks to the “Path” environment variable, we know that we need to copy wget.exe to the c:\Windows\System32 folder location.

Go ahead and copy WGET.exe to the System32 directory and restart your Command Prompt.

Restart command terminal and test WGET

If you want to test that WGET is working properly, restart your terminal and type:

wget -h

If you’ve copied the file to the right place, you’ll see a help file appear with all of the available commands.

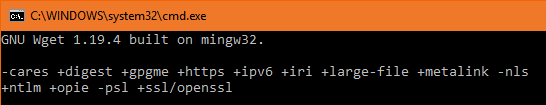

So, you should see something like this:

Now it’s time to get started.

Get started with WGET

Seeing that we’ll be working in Command Prompt, let’s create a download directory just for WGET downloads.

To create a directory, we’ll use the command md (“make directory”).

Change to the c:\ prompt and type:

md wgetdown

Then, change to your new directory and type “dir” to see the (blank) contents.

Now, you’re ready to do some downloading.

Example commands

Once you’ve got WGET installed and you’ve created a new directory, all you have to do is learn some of the finer points of WGET arguments to make sure you get what you need.

The Gnu.org WGET manual is a particularly useful resource for those inclined to really learn the details.

If you want some quick commands though, read on. I’ve listed a set of WGET commands to recursively mirror your site, download all the images, CSS and JavaScript, localise all of the URLs (so the site works on your local machine), and save all the pages as .html files.

To mirror your site, execute this command:

wget -r https://www.yoursite.com

To mirror the site and localise all of the URLs:

wget --convert-links -r https://www.yoursite.com

To make a full offline mirror of a site:

wget --mirror --convert-links --adjust-extension --page-requisites --no-parent https://www.yoursite.com
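Here is the same full-mirror command broken down flag by flag, as a sketch. The function name mirror_site is hypothetical, and --wait=1 is an optional extra that is not part of the command above; it is added here on the assumption that you want to go easy on the server.

```shell
#!/bin/sh
# mirror_site is a hypothetical helper; pass the site's root URL as $1.
mirror_site() {
    # --mirror            recursion with unlimited depth plus timestamp checks
    # --convert-links     rewrite links so the copy works offline
    # --adjust-extension  add .html/.css suffixes where the URL lacks them
    # --page-requisites   also fetch images, stylesheets and other files each page needs
    # --no-parent         stay below the starting directory
    # --wait=1            assumption: pause one second between requests
    wget --mirror --convert-links --adjust-extension \
         --page-requisites --no-parent --wait=1 "$1"
}
```

Run mirror_site https://www.yoursite.com inside your download directory. Drop --wait=1 if the site is your own and speed matters more.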

To mirror the site and save the files as .html (since Wget 1.12 this option is also available under the newer name --adjust-extension):

wget --html-extension -r https://www.yoursite.com

To download all jpg images from a site:

wget -A "*.jpg" -r https://www.yoursite.com
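The -A option also takes a comma-separated list, so one run can collect several file types. A minimal sketch follows; the helper name download_by_type and the default list of image suffixes are assumptions for illustration.

```shell
#!/bin/sh
# download_by_type is a hypothetical helper.
#   $1  site URL
#   $2  optional comma-separated list of suffixes (defaults to common image types)
download_by_type() {
    site=$1
    types=${2:-jpg,jpeg,png,gif}
    # -A keeps only files whose names end in one of the listed suffixes.
    wget -r -A "$types" "$site"
}
```

For example, download_by_type https://www.yoursite.com pdf,docx would keep only PDF and Word files.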

For more filetype-specific operations, check out this useful thread on Stack.

Set a different user agent:

Some web servers are set up to deny WGET’s default user agent, usually to save bandwidth. You could try changing your user agent to get round this. For example, by pretending to be Googlebot:

wget --user-agent="Googlebot/2.1 (+https://www.googlebot.com/bot.html)" -r https://www.yoursite.com

Wget “spider” mode:

Wget can fetch pages without saving them, which is useful when you’re looking for broken links on a website. Remember to enable recursive mode, which allows wget to scan through each document and look for links to traverse.

wget --spider -r https://www.yoursite.com

You can also save the output to a log file by adding the -o option:

wget --spider -r https://www.yoursite.com -o wget.log
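Once the spider run has written wget.log, you can search it for failed requests. This is only a sketch: it assumes the server answers missing pages with a 404 status and that your Wget build writes the response line in English. The function name count_missing is made up.

```shell
#!/bin/sh
# count_missing is a hypothetical helper; $1 is the log file (defaults to wget.log).
count_missing() {
    log=${1:-wget.log}
    # grep -c prints how many lines of the log mention a 404 response.
    grep -c '404 Not Found' "$log"
}
```

To see the surrounding lines, including the URLs that failed, open the log in an editor and search for the same phrase.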

Enjoy using this powerful tool, and I hope you’ve enjoyed my tutorial. Comments welcome!


There are many online tools, browser extensions and desktop plugins to turn websites into PDFs. If you use these tools often, you may run into situations where you need to convert multiple links in one go. Doing this link by link is tedious and wastes time.

You can automate this task with a simple command line utility called Wget. With the help of this tool and a few scripts or applications, this article shows you how to save multiple web pages as PDF files.

How to use Wget to convert multiple websites into PDF

Why choose Wget?

Wget is a free software package for downloading files from the web. It is also a very good tool for mirroring entire websites to your computer. Here are the reasons why Wget should be chosen:

Install Wget On macOS

The fastest way to install Wget is through Homebrew. Homebrew is a package manager for macOS, which installs useful Unix applications and utilities. Refer to the article: How to install and use wget on Mac for more details. Then type:

brew install wget

You will see real-time progress as Homebrew installs Wget and any tools it needs to run on your Mac. If you already have Homebrew installed, be sure to run brew upgrade to get the latest version of this utility.

On Windows 10

There are multiple versions of Wget available for Windows 10. Go to Eternally Bored to download the latest 64-bit build. Place the executable file in a folder named wget and copy it to drive C:.

Now, we will add the Wget path to the system environment variable so the tool can be run from any directory. Navigate to Control Panel > System and click Advanced System Settings. In the window that opens, click Environment Variables.

Select Path in System Variables and click Edit. Then click the New button located in the upper right corner of the window. Enter C:\wget and click OK.

Open Command Prompt and type wget -h to check if everything works. In PowerShell, type wget.exe -h to display the Wget help menu, because in Windows PowerShell plain wget is an alias for the built-in Invoke-WebRequest command.

Save the link in a text file

When you are dealing with many links, pasting each one by hand is tedious. Thankfully, there are browser extensions that can help you accomplish this task.

Set up a directory

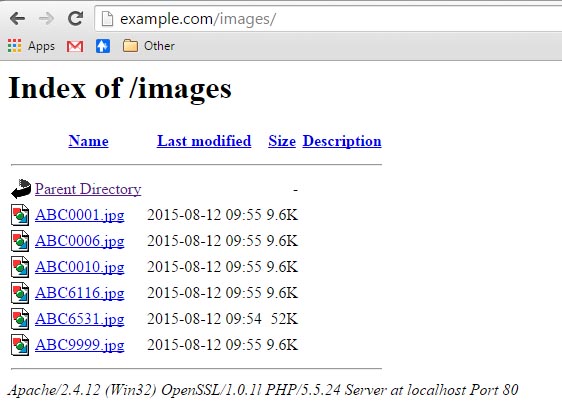

Wget works like a web crawler by extracting website assets from HTML files, including JavaScript files, logos, fonts, image thumbnails and CSS. Wget also tries to create a directory structure like the remote server. Create a separate directory for Wget downloads to save web pages and also to avoid clutter.

On Mac Terminal or in the Windows Command Prompt, type:

mkdir Wgetdown

This step creates a new folder in the Home folder. You can name it whatever you like. Next, type:

cd Wgetdown

This changes the current working directory to Wgetdown.

Details of the Wget commands

After creating the directory, we will use the actual Wget command:

Wget uses GNU getopt to handle command line arguments. Most options have two forms, a long one and a short one. The long form is easier to remember but takes longer to type. You can also mix long and short options in the same command. Let's dive into the details of these options:
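To make the long and short forms concrete, here is a small sketch that prints three equivalent ways of writing one command. The variable names are made up, and the command itself is just an illustration, not the command this article goes on to use.

```shell
#!/bin/sh
# Long form: self-explanatory, handy in scripts you will reread later.
long_form='wget --recursive --no-parent --convert-links https://www.yoursite.com'
# Short form: the same three options, quicker to type.
short_form='wget -r -np -k https://www.yoursite.com'
# Mixed form: long and short options can sit side by side.
mixed_form='wget -r --no-parent -k https://www.yoursite.com'

# Print the three variants; copy any one of them into your terminal to run it.
printf '%s\n' "$long_form" "$short_form" "$mixed_form"
```

All three lines ask Wget to do exactly the same thing.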

Put the commands into use

To show these commands in practice, consider a website called Writing Workflows (link: https://processedword.net/writing-workflows/index.html# ). This guide includes a table of contents with links to individual chapters. The goal is to create a separate PDF of each of those sections.

Step 1: Open Terminal and create a new folder, as discussed above.

Step 2: Use the Link Klipper extension to save the links as a text file. Save the file to the Downloads folder.

Step 3: From inside the Wgetdown folder, enter:

Step 4: Press Enter. Wait for the process to complete.

Step 5: Navigate to the Wgetdown folder. You will see the processedword.net directory of the main domain with all of the site's assets and chapter1.html.
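For the download step, one possible command reads the saved link list with --input-file. This is a sketch under assumptions, not necessarily the exact command intended for Step 3: the file name links.txt, the helper name fetch_links and the choice of flags are all illustrative.

```shell
#!/bin/sh
# fetch_links is a hypothetical helper; $1 is the text file of links, one URL per line.
fetch_links() {
    # Assumed default location and name for the list saved in Step 2.
    links_file=${1:-"$HOME/Downloads/links.txt"}
    # --input-file        read the URLs to fetch from the text file
    # --page-requisites   also fetch images, stylesheets and other files each page needs
    # --convert-links     rewrite links so the saved pages work offline
    # --adjust-extension  save pages with an .html suffix
    wget --input-file="$links_file" --page-requisites \
         --convert-links --adjust-extension
}
```

Run fetch_links from inside the Wgetdown folder so the downloaded pages land there.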

Convert HTML to PDF

Converting a web page into a PDF is quite simple. Making the PDF look like the original site is much harder. The result you get depends on:

Windows 10

PrinceXML is a fast application for converting HTML files to PDF. It allows you to typeset, format, and print HTML content with configurable layouts and supports web standards. It comes with many useful fonts and also allows you to customize the PDF output. This application is free for non-commercial use only.

macOS

On a Mac, you can create an Automator service to convert a batch of HTML files to PDF. Open Automator and create a Quick Action document. Set the service options to receive files or folders from Finder. Next, drag in Run Shell Script and set the Pass input option to as arguments. Then, paste this script into the body:

Save the file as HTML2PDF.

Now, select all the HTML files in the Finder. Right click and select Services > HTML2PDF. Wait a moment while all the files are converted.
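A minimal sketch of what such a Run Shell Script body could look like is shown below. It assumes the cupsfilter tool from the CUPS printing system on macOS can render HTML on your machine, and it is an illustration, not necessarily the script the HTML2PDF service was built with. The function name convert_to_pdf is made up.

```shell
#!/bin/sh
# convert_to_pdf is a hypothetical helper; each argument is the path of an HTML file.
convert_to_pdf() {
    for html_file in "$@"; do
        # Strip the file extension and write the PDF next to the source file.
        # Assumption: cupsfilter writes PDF to standard output by default.
        cupsfilter "$html_file" > "${html_file%.*}.pdf"
    done
}

# Automator hands the selected Finder files to the script as arguments.
convert_to_pdf "$@"
```

Each selected file such as chapter1.html ends up with a chapter1.pdf beside it.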

At first glance, the steps involved in converting many websites into PDFs seem complicated. But once you understand the steps and procedures, this will save time in the long run. You don't need to pay for an expensive web subscription or PDF converter.

If you're looking to turn a web page into a PDF, read the article: Save the entire site's content as a PDF for more details.

Hope you are successful.
